Model - Entity

Navigate to the Advanced Options pane on the left side of your screen. On the top of Advanced Options, navigate to the Model - Entity tab.

What are Entities?Entities are the gold-layer tables that are generated during the model building process. You can built any number of entities during a model build. Entities can be built using data from any bronze or silver table in your data estate. They can even be built using other gold-layer entities!

Know your Staging Notebook Locations!This section assumes you have your staging notebooks written and locations to those notebooks are known. We will be configuring those locations to the entries in the Entities table, so have those on hand.

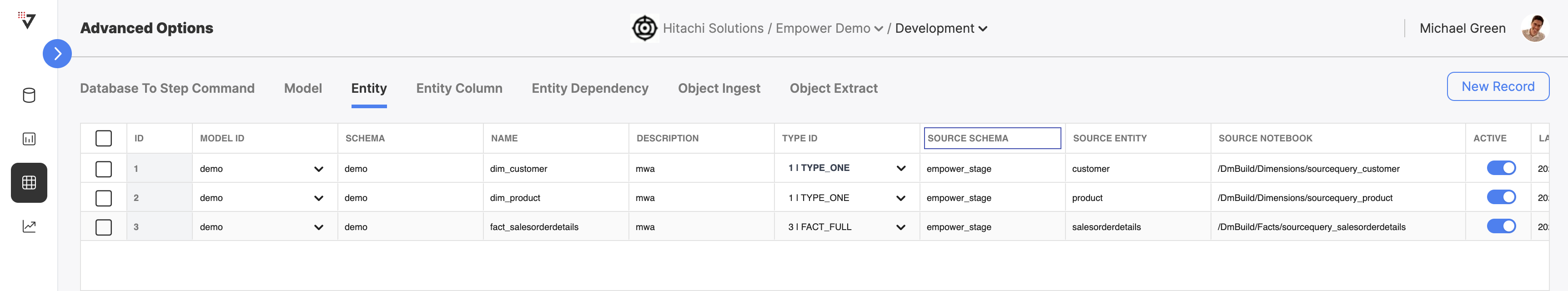

The Entity table defines the list of dims and facts for all your models. For each Entity, you can view the model this entity belongs to, its schema, the name of the entity and description, its type (a various assortment of dim and fact types), the source schema and source entity, and the staging notebook that this entity is build within. You can activate and deactivate any entity on demand. Deactivated entities will not be built during a model build process.

The Entity table, where you can view information about every entity.

Below is a table describing each of the Entity fields and example values.

| Field Name | Description | Example Value |

|---|---|---|

| ID | The unique generated ID for this entry (autogenerated). | 1 |

| Model ID | The model this entity is attached to. When the model is triggered, this entity will be built with it, if both are active! This must be an existing model in the Models table. | financial-model-division-a |

| Schema | The destination schema for this entity, i.e. which schema will this entity "live within" after being fully built. | empower-finance |

| Name | The name for this entity, i.e. what will this table be called. The newly built entity will "live within" this table after being full built. | dim_product |

| Description | A short description of this entity and what its for. | the product dimension for empower-finance, division A |

| Type ID | The type for this entity, can be any one of several dim and fact types | TYPE_ONE |

| Source Schema | The schema that the source notebook will write to and model builder will read from. | empower_stage |

| Source Entity | The table that the source notebook will write to and mode builder will read from. | product |

| Source Notebook | The location in DBFS where the staging notebook for this entity is. This is the notebook that will be run during the build phase for this entity, and the results placed in [schema].[name] | /DmBuild/Dimensions/sourcequery_product |

| Active | A toggle to activate/inactivate this entity | On |

| Last Modified | An uneditable field that displays the last time this entity was updated | 2023-08-23 19:09 (UTC) |

Filtering

You can filter Entity by Model for easy navigation.

Use these filters to quickly scope your view to a specific set of assets.

Creating an Entity

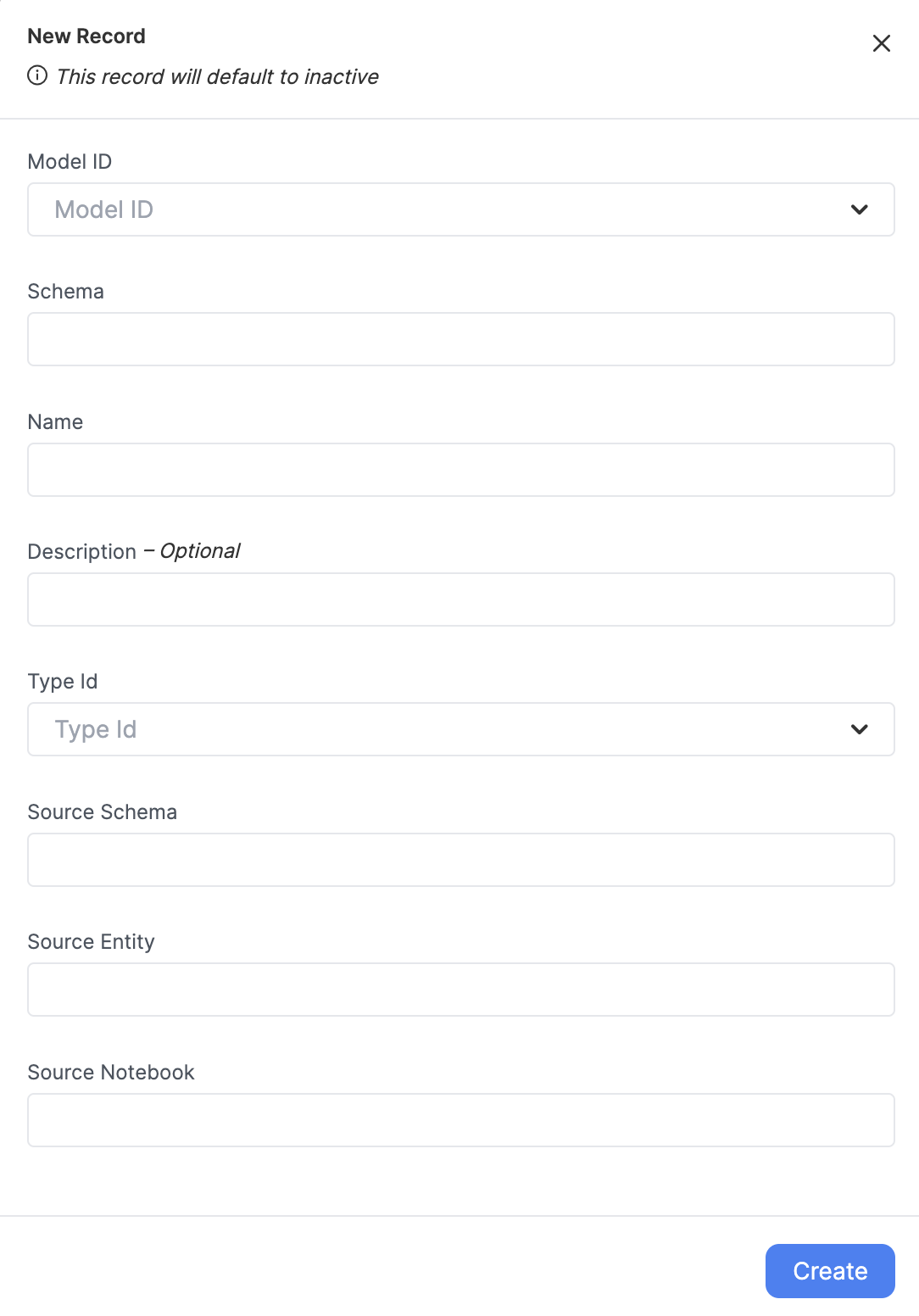

Create a new entity by clicking on "New Record". This will bring up the new Entity creation modal.

The new entity creation modal.

Entities Default to InactiveAfter creating a new entity, you will have to toggle it on for it to be built during the model building phase. All entities default to inactive state upon creation.

After filling out fields, clicking "Create" will result in a new entry in this table. Create an entry for each of your staging notebooks/entities in your model.

Activate your Entity!Make sure to toggle the Entity ACTIVE in order to start using it. Inactive Entities will not be built by the Empower system.

Entity Types

Entities can be several types, depending on their use and what kind of historical tracking is needed.

| Type Name | Description |

|---|---|

| Type One (Dimension) | New versions of existing data points will overwrite the existing data points. Table is built as a full load (not incrementally). |

| Type Two (Dimension) | New versions of existing data points will be inserted as new data points and flagged as the current version. Table is built as a full load (not incrementally). |

| Fact Full | A generic fact table. Table is built as a full load (not incrementally). |

| Type One Incremental (Dimension) | New versions of existing data points will overwrite the existing data points. Table is built incrementally. |

| Type Two Incremental (Dimension) | New versions of existing data points will be inserted as new data points and flagged as the current version. Table is built incrementally. |

| Fact Incremental | A generic fact table. Table is built incrementally. |

Understanding Full and Incremental Load Types

When building a model, it's essential to understand the difference between full and incremental load types. This section provides an overview of these two types and their use cases.

Full Loads

A Full Load involves loading all the data from the source to the destination each time the data is processed. In other words, the entire dataset is reloaded, regardless of whether the data has changed or not. This method can be a good choice when:

- The dataset is small, and the time required to process and reload the entire dataset is relatively short.

- The source data does not have a way to track changes or does not support incremental loads. **

- The latency will be roughly equal in both full and incremental, up to the processing time. Incremental will be more "real-time" in most cases.

However, Full Loads can be time-consuming and resource-intensive, especially when dealing with large datasets or frequent updates.

Incremental Loads

Incremental Load, on the other hand, only processes and loads new or modified data since the last load. This method is more efficient, as it reduces the amount of data being transferred and processed. It can be a good choice when:

- The dataset is large, and processing the entire dataset takes a long time.

- The source data has a method to track changes, such as a modified date or timestamp. **

- The data warehouse or destination can tolerate some delay in reflecting the latest changes.

Incremental Loads may require additional logic and setup to identify and process only the new or modified data. They may also require monitoring and handling of potential data conflicts or issues that may arise when merging the new data with the existing data.

Updated 7 months ago